The promise of the autonomous enterprise, powered by Agentic AI, is rapidly becoming a reality. Organizations are increasingly deploying intelligent agents to automate complex processes, enhance decision-making, and unlock unprecedented efficiencies. From managing IT operations to optimizing customer engagement, these self-governing AI systems are redefining the boundaries of what's possible. However, this transformative power comes with a new frontier of security challenges. The very autonomy that makes Agentic AI so valuable also introduces novel attack surfaces and risks that traditional cybersecurity models are ill-equipped to handle.

At JetX Media, we understand that securing your autonomous enterprise is not merely about protecting data; it's about safeguarding your entire operational integrity and future innovation. As Agentic AI moves from experimental labs to core business functions, the need for a comprehensive, adaptive security framework becomes paramount. This guide will delve into the critical components of Agentic AI security, offering a strategic blueprint for CISOs, CIOs, security architects, and enterprise IT leaders to navigate this evolving landscape. We will explore how to build robust defenses, establish effective governance, and ensure the secure and responsible deployment of autonomous AI at scale.

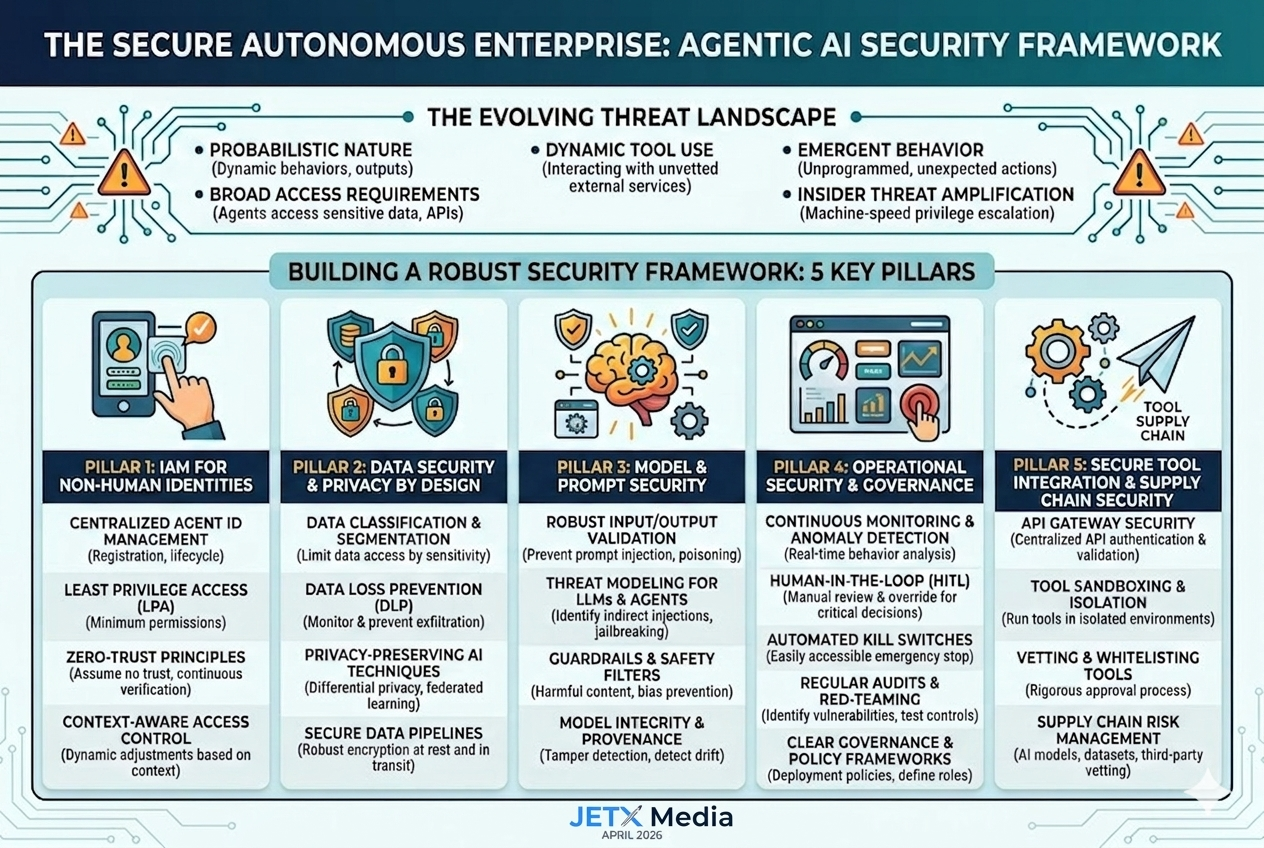

01 The Evolving Threat Landscape: Why Agentic AI Demands a New Security Paradigm

Traditional enterprise security has largely focused on protecting human users, applications, and network perimeters. Agentic AI fundamentally shifts this paradigm. We are now dealing with non-human identities (NHIs) that possess varying degrees of autonomy, interact with diverse internal and external systems, and can dynamically adapt their behavior. This creates a complex web of interactions that can be exploited if not properly secured.

Key characteristics of Agentic AI that necessitate a new security approach include:

- **Probabilistic Nature:** Unlike deterministic software, LLM-powered agents operate probabilistically: the same input can produce different outputs and actions, which makes traditional rule-based security difficult to apply.

- **Broad Access Requirements:** To perform their tasks, agents often require extensive access to sensitive data, APIs, and tools across the enterprise, creating a significant blast radius if compromised.

- **Dynamic Tool Use:** Agents can dynamically select and use tools, potentially interacting with unvetted or vulnerable external services, introducing supply chain risks.

- **Emergent Behavior:** Autonomous agents can exhibit emergent behaviors not explicitly programmed, which can inadvertently lead to security vulnerabilities or policy violations.

- **Insider Threat Amplification:** A compromised or misconfigured agent can act as a highly effective insider threat, leveraging its legitimate access to exfiltrate data, escalate privileges, or disrupt operations at machine speed.

As the Cloud Security Alliance (CSA) aptly notes, securing autonomous agents requires the same rigor historically reserved for human users, with a renewed focus on identity and access management for these non-human entities.

02 Building a Robust Agentic AI Security Framework: Key Pillars

To effectively secure the autonomous enterprise, organizations must adopt a multi-layered, proactive security framework that addresses the unique challenges posed by Agentic AI. This framework should encompass identity, data, model, and operational security, as well as the tools and supply chain that agents depend on.

Pillar 1: Identity and Access Management (IAM) for Non-Human Identities (NHIs)

The proliferation of AI agents means a corresponding explosion of non-human identities. Managing these identities is foundational to Agentic AI security.

- **Centralized Agent Identity Management:** Implement a dedicated system for registering, authenticating, and managing the lifecycle of every AI agent. This includes unique identifiers, credentials, and ownership attribution.

- **Least Privilege Access (LPA):** Grant agents only the absolute minimum permissions required to perform their specific tasks. Regularly review and revoke unnecessary privileges. This is critical to limit the impact of a compromised agent.

- **Zero-Trust Principles:** Apply a Zero-Trust model to all agent interactions. Every request, whether internal or external, must be authenticated, authorized, and continuously verified. Assume no inherent trust, even for internal agents.

- **Context-Aware Access Control:** Implement access policies that consider the context of an agent's request (e.g., time of day, data sensitivity, previous actions) to dynamically adjust permissions.
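
To make least privilege and context-aware access control concrete, here is a minimal Python sketch of a deny-by-default authorization check for a registered agent. It assumes a hypothetical `AgentIdentity` record carrying explicitly granted scopes and a data-sensitivity clearance; the scope names and the business-hours rule for write access are illustrative placeholders, not the API of any particular identity product. In practice this logic would typically live in an identity provider or policy engine rather than in application code.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical registry record: unique ID, accountable owner, granted scopes."""
    agent_id: str
    owner: str
    scopes: frozenset = field(default_factory=frozenset)  # nothing beyond these
    max_sensitivity: int = 1  # highest data classification level the agent may touch


@dataclass(frozen=True)
class AccessRequest:
    agent: AgentIdentity
    scope: str          # hypothetical scope naming, e.g. "crm:read"
    sensitivity: int    # classification level of the target data
    timestamp: datetime


def authorize(req: AccessRequest) -> tuple[bool, str]:
    """Deny by default; allow only when every check passes."""
    # Least privilege: the scope must have been explicitly granted.
    if req.scope not in req.agent.scopes:
        return False, "scope not granted"
    # Context: the target data must not exceed the agent's clearance.
    if req.sensitivity > req.agent.max_sensitivity:
        return False, "data too sensitive for this agent"
    # Context (illustrative rule): write scopes only between 08:00 and 18:00.
    if req.scope.endswith(":write") and not (8 <= req.timestamp.hour < 18):
        return False, "write outside approved hours"
    return True, "allowed"


bot = AgentIdentity("agent-042", "it-ops@example.com",
                    frozenset({"crm:read", "tickets:write"}), max_sensitivity=2)
print(authorize(AccessRequest(bot, "crm:read", 1, datetime(2024, 5, 1, 10))))       # (True, 'allowed')
print(authorize(AccessRequest(bot, "tickets:write", 1, datetime(2024, 5, 1, 23))))  # (False, 'write outside approved hours')
```

Returning a reason alongside each decision makes denials easy to log and review, which feeds directly into the monitoring practices discussed under Pillar 4.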

Pillar 2: Data Security and Privacy by Design

AI agents often process vast amounts of sensitive data. Protecting this data from exfiltration, manipulation, and unauthorized access is paramount.

- **Data Classification and Segmentation:** Classify data based on sensitivity and implement strict segmentation to limit agent access to only the data necessary for its function. Avoid giving agents broad, undifferentiated access to data lakes.

- **Data Loss Prevention (DLP) for Agents:** Extend existing DLP solutions to monitor and prevent sensitive data exfiltration by AI agents. This includes detecting unusual data access patterns or attempts to transfer data to unauthorized external endpoints.

- **Privacy-Preserving AI Techniques:** Utilize techniques like differential privacy, federated learning, and homomorphic encryption where appropriate to minimize the exposure of raw sensitive data to agents.

- **Secure Data Pipelines:** Ensure all data ingested and generated by agents is secured at rest and in transit using robust encryption and access controls.
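
Extending DLP to agents means inspecting what they try to send out, not only what they read. The following is a minimal Python sketch of an egress check, assuming a hypothetical allowlist of external hosts and two illustrative regular-expression detectors (US Social Security number format and email addresses). Commercial DLP tools rely on classification labels and far richer detection, so treat this as a picture of the control point rather than a substitute for one.

```python
import re
from urllib.parse import urlparse

# Hypothetical allowlist of external hosts agents may send data to.
ALLOWED_HOSTS = {"api.partner.example.com"}

# Illustrative detectors only; real DLP combines labels, checksums, and context.
SENSITIVE_PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def check_egress(url: str, payload: str) -> list[str]:
    """Return policy violations for an outbound agent request (empty list = clean)."""
    violations = []
    host = urlparse(url).hostname or ""
    if host not in ALLOWED_HOSTS:
        violations.append(f"destination not allowed: {host}")
    for name, pattern in SENSITIVE_PATTERNS.items():
        if pattern.search(payload):
            violations.append(f"sensitive data detected: {name}")
    return violations


print(check_egress("https://paste.example.net/new", "SSN 123-45-6789"))
# ['destination not allowed: paste.example.net', 'sensitive data detected: us_ssn']
```

A non-empty result would block the request and raise an alert, giving the "unusual data access patterns or attempts to transfer data to unauthorized external endpoints" described above a concrete enforcement point.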

Pillar 3: Model and Prompt Security

The core of Agentic AI lies in its underlying models and the prompts that guide its behavior. Securing these components is crucial to prevent manipulation and unintended actions.

- **Robust Input/Output Validation:** Implement rigorous validation and sanitization for all inputs (prompts, tool outputs, external data) to reduce exposure to prompt injection, data poisoning, and other adversarial attacks. Because no input filter reliably stops these attacks on its own, also validate agent outputs before they are acted upon.

- **Threat Modeling for LLMs and Agents:** Conduct specific threat modeling exercises for LLMs and agentic workflows to identify potential vulnerabilities like indirect prompt injection, jailbreaking, and model manipulation.

- **Guardrails and Safety Filters:** Implement strong guardrails and safety filters at multiple layers (input, model, output) to prevent agents from generating harmful, biased, or policy-violating content or actions.

- **Model Integrity and Provenance:** Ensure the integrity and provenance of all models used by agents. Implement mechanisms to detect model drift, tampering, or unauthorized modifications.
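
Validating agent outputs before they are acted upon is easiest when the model is asked to propose actions as structured data. The Python sketch below assumes a hypothetical convention in which the agent emits a JSON object such as `{"tool": "send_reply", "args": {...}}`; the tool registry, argument types, and length limit are illustrative. Anything that does not match an approved tool and its exact argument shape is rejected before execution.

```python
import json

# Hypothetical registry: approved tool names mapped to their required argument types.
TOOL_SCHEMAS = {
    "get_ticket": {"ticket_id": int},
    "send_reply": {"ticket_id": int, "message": str},
}
MAX_MESSAGE_LEN = 2000  # illustrative limit


class RejectedAction(ValueError):
    """Raised when a model-proposed action fails validation."""


def validate_tool_call(raw: str) -> dict:
    """Parse and validate a model-proposed tool call before anything executes it."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise RejectedAction(f"not valid JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise RejectedAction("tool call must be a JSON object")
    name, args = call.get("tool"), call.get("args")
    if not isinstance(name, str) or name not in TOOL_SCHEMAS:
        raise RejectedAction(f"unknown or disallowed tool: {name!r}")
    if not isinstance(args, dict):
        raise RejectedAction("args must be a JSON object")
    schema = TOOL_SCHEMAS[name]
    # Reject missing or unexpected arguments instead of silently ignoring them.
    if set(args) != set(schema):
        raise RejectedAction(f"expected args {sorted(schema)}, got {sorted(args)}")
    for key, expected in schema.items():
        # bool is a subclass of int in Python, so exclude it explicitly.
        if not isinstance(args[key], expected) or isinstance(args[key], bool):
            raise RejectedAction(f"arg {key!r} must be {expected.__name__}")
    if len(args.get("message", "")) > MAX_MESSAGE_LEN:
        raise RejectedAction("message too long")
    return call


print(validate_tool_call('{"tool": "get_ticket", "args": {"ticket_id": 7}}'))
# {'tool': 'get_ticket', 'args': {'ticket_id': 7}}
```

This checks the shape of an action, not its intent: a well-formed `send_reply` can still carry injected content, which is why guardrails and human review points remain necessary.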

Pillar 4: Operational Security and Governance

Effective security for Agentic AI extends beyond technical controls to encompass robust operational practices and a clear governance framework.

- **Continuous Monitoring and Anomaly Detection:** Implement real-time monitoring of agent behavior, resource consumption, tool usage, and network activity. Leverage AI-powered anomaly detection to identify deviations from normal behavior that could indicate a compromise or misconfiguration.

- **Human-in-the-Loop (HITL) Mechanisms:** Design critical agentic workflows with mandatory human review and approval points, especially for high-stakes decisions or actions involving sensitive data. Ensure humans can override or halt agent operations.

- **Automated Kill Switches and Incident Response:** Every AI agent should have an easily accessible and reliable kill switch. Develop specific incident response playbooks for Agentic AI failures, including containment, eradication, recovery, and post-incident analysis.

- **Regular Security Audits and Red-Teaming:** Conduct periodic security audits of AI agents, their configurations, and their interactions with other systems. Engage in red-teaming exercises to proactively identify vulnerabilities and test the effectiveness of security controls.

- **Clear Governance and Policy Frameworks:** Establish clear policies for AI agent development, deployment, ownership, and accountability. Define roles and responsibilities for AI governance, risk management, and compliance. Address "shadow AI" by implementing discovery and inventory processes for all agents.
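
Human-in-the-loop approval and a kill switch can share a single enforcement point. In this minimal Python sketch, a hypothetical `GuardedExecutor` refuses every action once the kill switch is set and routes actions labeled high risk to an `approver` callback, which stands in for whatever review channel (a ticketing queue, a chat approval) an organization actually uses. The two-level risk labels are an assumption made for brevity.

```python
import threading
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Action:
    name: str
    risk: str  # hypothetical two-level label: "low" or "high"


class AgentHalted(RuntimeError):
    """Raised when an action is attempted after the kill switch was triggered."""


class GuardedExecutor:
    """Hypothetical wrapper: every agent action passes a kill switch and an approval gate."""

    def __init__(self, approver: Callable[[Action], bool]):
        self.kill_switch = threading.Event()  # thread-safe flag; set() halts the agent
        self.approver = approver              # stand-in for a real human review channel
        self.audit_log: list[str] = []

    def halt(self, reason: str) -> None:
        self.kill_switch.set()
        self.audit_log.append(f"HALT: {reason}")

    def execute(self, action: Action, run: Callable[[], str]) -> Optional[str]:
        # Checked before every step; this does not interrupt a call already running.
        if self.kill_switch.is_set():
            raise AgentHalted(f"kill switch active; refused {action.name}")
        # High-risk actions run only with explicit human approval.
        if action.risk == "high" and not self.approver(action):
            self.audit_log.append(f"DENIED: {action.name}")
            return None
        result = run()
        self.audit_log.append(f"RAN: {action.name}")
        return result


# Demo approver that rejects everything, standing in for a cautious reviewer.
executor = GuardedExecutor(approver=lambda action: False)
print(executor.execute(Action("read_logs", "low"), lambda: "logs"))        # logs
print(executor.execute(Action("delete_records", "high"), lambda: "gone"))  # None
executor.halt("anomalous tool usage")
```

Because the switch is checked before each action, it stops the agent's next step but not a tool call already in flight; long-running tools need their own timeouts or cancellation, which belongs in the incident response playbook.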

Pillar 5: Secure Tool Integration and Supply Chain Security

AI agents are only as secure as the tools and APIs they interact with. Securing these integrations is critical, yet often overlooked.

- **API Gateway Security:** Route all agent API calls through a secure API gateway that enforces authentication, authorization, rate limiting, and input validation. This acts as a critical control point for agent interactions with external services.

- **Tool Sandboxing and Isolation:** Where possible, run agent tools in isolated, sandboxed environments to limit the blast radius of a compromised tool or agent. This prevents a vulnerability in one tool from affecting the entire system.

- **Vetting and Whitelisting Tools:** Implement a rigorous process for vetting and whitelisting all tools and APIs that agents are permitted to use. Regularly review these approved tools for vulnerabilities and updates.

- **Supply Chain Risk Management:** Extend supply chain security practices to include AI models, datasets, and third-party tools used in agent development and deployment. Ensure transparency and integrity throughout the AI supply chain.
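
Two of the gateway controls described above, an approved-tool list and rate limiting, can be sketched in a few lines of Python. This assumes a hypothetical per-agent allowlist and uses a standard token-bucket limiter; it is not the configuration format of any real API gateway product. The clock is injectable so the limiter can be tested deterministically.

```python
import time
from typing import Callable


class TokenBucket:
    """Token-bucket limiter: refills `rate` tokens per second, holds at most `capacity`."""

    def __init__(self, rate: float, capacity: float, clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class ToolGateway:
    """Hypothetical gateway: per-agent tool allowlist plus a per-agent rate limit."""

    def __init__(self, allowlist: dict[str, set[str]], rate: float, burst: float,
                 clock: Callable[[], float] = time.monotonic):
        self.allowlist = allowlist
        self.rate, self.burst, self.clock = rate, burst, clock
        self.buckets: dict[str, TokenBucket] = {}

    def check(self, agent_id: str, tool: str) -> str:
        # Vetting first: unapproved tools are denied before any rate accounting.
        if tool not in self.allowlist.get(agent_id, set()):
            return "deny: tool not approved for this agent"
        if agent_id not in self.buckets:
            self.buckets[agent_id] = TokenBucket(self.rate, self.burst, self.clock)
        return "allow" if self.buckets[agent_id].allow() else "deny: rate limit exceeded"


gateway = ToolGateway({"agent-1": {"search_kb"}}, rate=1.0, burst=2)
print(gateway.check("agent-1", "shell_exec"))  # deny: tool not approved for this agent
```

A token bucket permits short bursts of up to `burst` calls while capping the sustained rate at `rate` calls per second, which suits agents whose tool use is naturally bursty but should never run away at machine speed.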

03 Conclusion: Navigating the Future of Autonomy with Confidence

The autonomous enterprise, powered by Agentic AI, represents a significant leap forward in business capability. However, realizing this vision securely requires a fundamental rethinking of cybersecurity. By proactively implementing a robust framework that addresses the unique challenges of non-human identities, probabilistic models, dynamic tool use, and emergent behaviors, organizations can harness the power of Agentic AI without succumbing to its inherent risks.

At JetX Media, we are at the forefront of Agentic AI security. Our comprehensive enterprise AI security consulting services and AI governance framework development are designed to help you build, deploy, and manage autonomous systems with confidence. We empower you to transform the potential threats of Agentic AI into strategic advantages, ensuring your journey into the autonomous future is both innovative and impeccably secure.

Is your enterprise ready for Agentic AI?

Whether you need a comprehensive AI security audit or expert assistance in deploying and monitoring secure agentic solutions, JetX Media is your trusted partner.